Apache Beam: A Basic Guide

Apache Beam simplifies large-scale data processing. Let's look at the features, basic concepts, and fundamentals of Apache Beam.

Table of Contents

- What is Apache Beam?

- Features of Apache Beam

- Apache Beam SDKs and Runners

- Basic Concepts in Apache Beam

- Example of Pipeline

What is Apache Beam?

In this guide, we'll talk about Apache Beam and its fundamental concepts. We'll begin with the features and advantages of using Apache Beam, and then cover its basic concepts and terminology.

Ever since big data was introduced to the programming world, many different technologies and frameworks have emerged. Data processing can be divided into two paradigms: batch processing and stream processing. Batch processing works on a finite dataset that is available up front, whereas stream processing handles data continuously as it arrives.

Different technologies emerged for each paradigm to solve various big data problems, e.g., Apache Spark, Apache Flink, and Apache Storm.

For developers and businesses alike, maintaining several tech stacks is always challenging. This is where Apache Beam comes to the rescue!


Apache Beam is an open source, unified model for defining parallel-processing pipelines for both batch and streaming data. Beam's programming model simplifies the mechanics of large-scale data processing.

The Apache Beam model offers useful abstractions that insulate you from the low-level details of distributed processing, such as coordinating individual workers, sharding datasets, and other such tasks. These low-level details are handled by the runner you choose, for example, Google Cloud Dataflow.

Features of Apache Beam

The key features of Apache Beam are as follows:

- Unified - Use a single programming model for both batch and streaming use cases.

- Portable - Execute pipelines on multiple execution environments, i.e., different runners such as the Spark runner and the Dataflow runner.

- Extensible - Write custom SDKs, IO connectors, and transformation libraries.

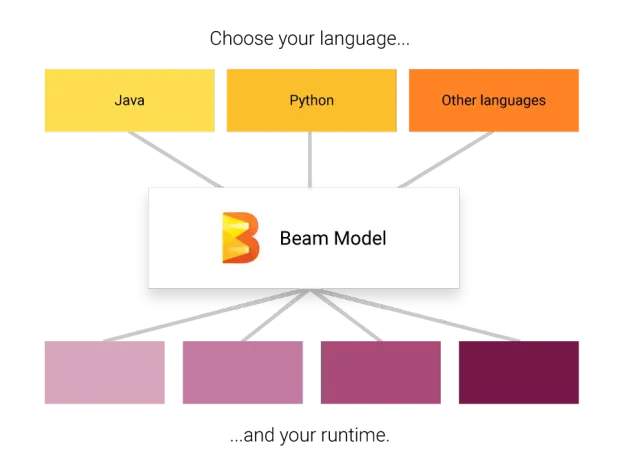

Apache Beam SDKs and Runners

At the time of writing, there are three Apache Beam SDKs:

- Java

- Python

- Golang

Beam runners translate a Beam pipeline into the API of the processing backend of your choice. At the time of writing, Beam supports runners that work with the following backends:

- Apache Spark

- Apache Flink

- Apache Samza

- Google Cloud Dataflow

- Hazelcast Jet

- Twister2

There is also the Direct Runner, which executes pipelines locally on your machine and is used for testing and development.
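The following minimal sketch shows what portability means in code. It assumes the Java SDK plus the beam-runners-direct-java dependency listed in the pom.xml later in this guide; the class name RunnerSelection and the helper parseOptions are hypothetical. The pipeline definition never names a runner; it is chosen at launch time with a flag such as --runner=DirectRunner. Switching to, say, --runner=FlinkRunner would additionally require that runner's artifact on the classpath.

```java
import org.apache.beam.sdk.Pipeline;
import org.apache.beam.sdk.options.PipelineOptions;
import org.apache.beam.sdk.options.PipelineOptionsFactory;
import org.apache.beam.sdk.transforms.Create;

// Hypothetical example class: the same code runs on whichever runner --runner selects
public class RunnerSelection {

  // Builds PipelineOptions from flags such as --runner=DirectRunner.
  // Without a --runner flag, Beam falls back to the DirectRunner.
  static PipelineOptions parseOptions(String[] args) {
    return PipelineOptionsFactory.fromArgs(args).withValidation().create();
  }

  public static void main(String[] args) {
    Pipeline pipeline = Pipeline.create(parseOptions(args));
    // Nothing below refers to a specific runner
    pipeline.apply("Create sample data", Create.of("abhi", "virat", "dhoni"));
    pipeline.run().waitUntilFinish();
  }
}
```

Launching it with --runner=DirectRunner runs it locally; the only thing that changes between environments is the flag and the runner dependency.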

Basic Concepts in Apache Beam

Apache Beam has three main abstractions:

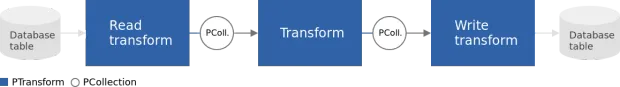

- Pipeline

- PCollection

- PTransform

Pipeline:

A pipeline is the first abstraction you create. It encapsulates the complete data processing job from start to finish: reading input data, transforming it, and writing the results to a sink. Every pipeline takes options (PipelineOptions) that tell it where and how to run.

PCollection:

A PCollection is an abstraction of a distributed dataset. A PCollection can be bounded, i.e., finite data, or unbounded, i.e., infinite data. The initial PCollection is created by reading data from a source. From then on, PCollections are the inputs and outputs of every step in the pipeline.
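To make the bounded/unbounded distinction concrete, here is a small sketch (the class name BoundedVsUnbounded is hypothetical) that only constructs a pipeline without running it. Create.of produces a bounded PCollection from in-memory values, while GenerateSequence.from(0) with no upper limit produces an unbounded one; PCollection.isBounded() reports which kind you have.

```java
import java.util.Arrays;
import java.util.List;

import org.apache.beam.sdk.Pipeline;
import org.apache.beam.sdk.io.GenerateSequence;
import org.apache.beam.sdk.transforms.Create;
import org.apache.beam.sdk.values.PCollection;

public class BoundedVsUnbounded {

  // Builds (but does not run) a pipeline with one bounded and one unbounded PCollection
  static List<PCollection.IsBounded> describe() {
    Pipeline pipeline = Pipeline.create();

    // A fixed, in-memory set of elements: a bounded (finite) PCollection
    PCollection<String> names = pipeline.apply("Names", Create.of("abhi", "virat", "dhoni"));

    // A sequence with no upper limit keeps producing numbers: an unbounded PCollection
    PCollection<Long> ticks = pipeline.apply("Ticks", GenerateSequence.from(0));

    return Arrays.asList(names.isBounded(), ticks.isBounded());
  }

  public static void main(String[] args) {
    System.out.println(describe()); // [BOUNDED, UNBOUNDED]
  }
}
```

The pipeline is deliberately not run: an unbounded source keeps producing data until the job is cancelled.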

PTransform:

A transform (PTransform) is a data processing operation. A transform takes one or more PCollections as input and produces zero or more PCollections as output. Composite transforms nest other transforms within them. You apply a transform to a PCollection with its apply method; a custom composite transform places its logic in an overridden expand method.
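Here is a sketch of a composite transform, assuming the Java SDK. The hypothetical VowelNames class extends PTransform, overrides expand() to chain two nested transforms (MapElements to lower-case each name, then Filter to keep names starting with a vowel), and is then used through apply() like any built-in transform.

```java
import org.apache.beam.sdk.Pipeline;
import org.apache.beam.sdk.transforms.Create;
import org.apache.beam.sdk.transforms.Filter;
import org.apache.beam.sdk.transforms.MapElements;
import org.apache.beam.sdk.transforms.PTransform;
import org.apache.beam.sdk.values.PCollection;
import org.apache.beam.sdk.values.TypeDescriptors;

// Hypothetical composite transform: lower-cases names and keeps those starting with a vowel
public class VowelNames extends PTransform<PCollection<String>, PCollection<String>> {

  // Plain helper, static so the lambda below does not capture the transform instance
  static boolean startsWithVowel(String name) {
    return !name.isEmpty() && "aeiou".indexOf(name.charAt(0)) >= 0;
  }

  @Override
  public PCollection<String> expand(PCollection<String> names) {
    // expand() is where a composite transform wires up its nested transforms
    return names
        .apply("Lower-case", MapElements.into(TypeDescriptors.strings()).via((String name) -> name.toLowerCase()))
        .apply("Keep vowel names", Filter.by((String name) -> startsWithVowel(name)));
  }

  public static void main(String[] args) {
    Pipeline pipeline = Pipeline.create();
    pipeline
        .apply(Create.of("abhi", "virat", "Edmund", "Ojha"))
        // Callers apply the composite exactly like a built-in transform
        .apply("Vowel names", new VowelNames());
    pipeline.run().waitUntilFinish();
  }
}
```

Grouping steps this way keeps the pipeline readable and lets the composite show up as a single named step in runner UIs.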

Example of Pipeline

Let's write a pipeline that outputs every JSON record whose name starts with a vowel.

Take the following sample input and save it as input.json:

```
{"name":"abhi", "score":12}
{"name":"virat", "score":23}
{"name":"dhoni", "score":45}
{"name":"rahul", "score": 156}
{"name": "Edmund"}
{"name": "Ojha"}
```

The input must be newline-delimited JSON, i.e., one JSON object per line.

Include the following dependencies in your pom.xml:

```xml
<dependency>
  <groupId>org.apache.beam</groupId>
  <artifactId>beam-sdks-java-core</artifactId>
  <version>2.24.0</version>
</dependency>
<dependency>
  <groupId>org.apache.beam</groupId>
  <artifactId>beam-runners-direct-java</artifactId>
  <version>2.24.0</version>
</dependency>
```

Let's code the Beam pipeline. Follow these steps.

Step 1: Create a pipeline.

```java
Pipeline pipeLine = Pipeline.create();
// OR
// Pipeline pipeLine = Pipeline.create(options);
```

Create a pipeline, which ties together all the PCollections and transforms. Optionally, you can pass PipelineOptions if needed.
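If the pipeline needs its own parameters, a common pattern is to declare an interface that extends PipelineOptions and let PipelineOptionsFactory parse the command line into it. This is a sketch: VowelPipelineOptionsExample, VowelOptions, and its inputFile option are hypothetical names, not part of Beam.

```java
import org.apache.beam.sdk.Pipeline;
import org.apache.beam.sdk.options.Default;
import org.apache.beam.sdk.options.Description;
import org.apache.beam.sdk.options.PipelineOptions;
import org.apache.beam.sdk.options.PipelineOptionsFactory;

public class VowelPipelineOptionsExample {

  // Hypothetical options interface: Beam generates the implementation from the getter/setter pair
  public interface VowelOptions extends PipelineOptions {
    @Description("Path of the newline-delimited JSON input file")
    @Default.String("input.json")
    String getInputFile();

    void setInputFile(String value);
  }

  static VowelOptions parse(String[] args) {
    // e.g. args = {"--inputFile=other.json", "--runner=DirectRunner"}
    return PipelineOptionsFactory.fromArgs(args).withValidation().as(VowelOptions.class);
  }

  public static void main(String[] args) {
    VowelOptions options = parse(args);
    // The options object is what Pipeline.create(options) expects
    Pipeline pipeLine = Pipeline.create(options);
    System.out.println("Reading from " + options.getInputFile());
  }
}
```

The read step could then use TextIO.read().from(options.getInputFile()) instead of a hard-coded file name.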

Step 2: Read the input file.

```java
PCollection<String> inputCollection = pipeLine.apply("Read My File", TextIO.read().from("input.json"));
```

Use the TextIO transform to read the input file. TextIO reads the file line by line, so every element of the resulting PCollection is one JSON record.

Step 3: Apply a transform that keeps only the names starting with a vowel.

```java
final Set<String> vowels = new HashSet<>(Arrays.asList("a", "e", "i", "o", "u"));

PCollection<String> filteredCollection = inputCollection.apply("Filter names starting with vowels",
    Filter.by(new SerializableFunction<String, Boolean>() {
      @Override
      public Boolean apply(String input) {
        ObjectMapper jacksonObjMapper = new ObjectMapper();
        try {
          JsonNode jsonNode = jacksonObjMapper.readTree(input);
          String name = jsonNode.get("name").textValue();
          // Compare the lower-cased first character against the vowel set
          return vowels.contains(name.substring(0, 1).toLowerCase());
        } catch (JsonProcessingException e) {
          e.printStackTrace();
        }
        return false;
      }
    }));
```

The Filter transform takes a SerializableFunction object whose apply method is overridden. Each JSON string record is parsed into a JSON tree, and the first character of its name field is checked to see whether it is a vowel. The function is applied to every input record: records for which it returns true are kept, and the rest are discarded.
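Creating a new ObjectMapper for every element, as the Filter above does, works but is wasteful. A possible alternative, sketched here with a hypothetical VowelNameFn class, is a DoFn that builds the mapper once in a @Setup method and emits matching records from @ProcessElement, applied with ParDo. It also returns false for records that lack a name field, where the function above would throw a NullPointerException.

```java
import java.io.IOException;

import com.fasterxml.jackson.databind.JsonNode;
import com.fasterxml.jackson.databind.ObjectMapper;
import org.apache.beam.sdk.Pipeline;
import org.apache.beam.sdk.transforms.Create;
import org.apache.beam.sdk.transforms.DoFn;
import org.apache.beam.sdk.transforms.ParDo;

// Hypothetical alternative to the Filter + SerializableFunction step
public class VowelNameFn extends DoFn<String, String> {

  // Not serialized with the DoFn; created once per DoFn instance in @Setup
  private transient ObjectMapper mapper;

  @Setup
  public void setup() {
    mapper = new ObjectMapper();
  }

  // Pure helper so the JSON check can be tested without running a pipeline
  static boolean nameStartsWithVowel(ObjectMapper mapper, String json) {
    try {
      JsonNode root = mapper.readTree(json);
      JsonNode name = (root == null) ? null : root.get("name");
      if (name == null || name.asText().isEmpty()) {
        return false; // no usable "name" field
      }
      return "aeiou".indexOf(Character.toLowerCase(name.asText().charAt(0))) >= 0;
    } catch (IOException e) {
      return false; // treat malformed lines as non-matching
    }
  }

  @ProcessElement
  public void processElement(@Element String json, OutputReceiver<String> out) {
    if (nameStartsWithVowel(mapper, json)) {
      out.output(json); // emit the original JSON string unchanged
    }
  }

  public static void main(String[] args) {
    Pipeline pipeline = Pipeline.create();
    pipeline
        .apply(Create.of("{\"name\":\"abhi\", \"score\":12}", "{\"name\":\"virat\", \"score\":23}"))
        .apply("Filter names starting with vowels", ParDo.of(new VowelNameFn()));
    pipeline.run().waitUntilFinish();
  }
}
```

Jackson is assumed to be on the classpath, as it already is for the ObjectMapper used in this guide.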

Step 4: Write the results to a file.

```java
filteredCollection.apply("write to file", TextIO.write().to("result").withSuffix(".txt").withoutSharding());
```

The results of the Filter transform are written to a text file using TextIO's write method. Because PCollections are distributed across machines, the output is normally written to multiple files (shards). To avoid this, we use withoutSharding, which writes all the output to a single file (here, result.txt). This limits write parallelism, so it suits small outputs like this one.

Output:

```
{"name": "Edmund"}
{"name": "Ojha"}
{"name":"abhi", "score":12}
```

Beam does not guarantee element order, so the lines may appear in a different order.

Complete Code:

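```java
import com.fasterxml.jackson.core.JsonProcessingException;
import com.fasterxml.jackson.databind.JsonNode;
import com.fasterxml.jackson.databind.ObjectMapper;
import java.util.Arrays;
import java.util.HashSet;
import java.util.Set;
import org.apache.beam.sdk.Pipeline;
import org.apache.beam.sdk.io.TextIO;
import org.apache.beam.sdk.transforms.Filter;
import org.apache.beam.sdk.transforms.SerializableFunction;

public class VowelNamesPipeline {
  public static void main(String[] args) {
    Pipeline pipeLine = Pipeline.create();
    // Captured by the function below, so it must be Serializable (HashSet is)
    final Set<String> vowels = new HashSet<>(Arrays.asList("a", "e", "i", "o", "u"));
    pipeLine.apply("Read My File", TextIO.read().from("input.json"))
        .apply("Filter names starting with vowels", Filter.by(new SerializableFunction<String, Boolean>() {
          @Override
          public Boolean apply(String input) {
            ObjectMapper jacksonObjMapper = new ObjectMapper();
            try {
              JsonNode nameNode = jacksonObjMapper.readTree(input).get("name");
              if (nameNode == null || nameNode.asText().isEmpty()) {
                return false; // skip records without a usable name
              }
              return vowels.contains(nameNode.asText().substring(0, 1).toLowerCase());
            } catch (JsonProcessingException e) {
              e.printStackTrace();
            }
            return false;
          }
        }))
        .apply("write to file", TextIO.write().to("result").withSuffix(".txt").withoutSharding());
    pipeLine.run().waitUntilFinish();
  }
}
```

For more advanced concepts, refer to the official site: beam.apache.org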
